The user asked both workers:

"The map does not feel like Find My or Life360. What can you find? No changes, just responses."

Case study

This example is based on a real product session: a builder wanted to know why a location-sharing map did not feel as alive as Find My or Life360.

Claude focused on the feel: pin complexity, visual restraint, place clustering, and the difference between a calm map and a busy status surface.

Codex focused on update cadence, prediction, battery tradeoffs, sync behavior, and why a map can look smooth but still feel stale.

Verdict: the product was optimized as a battery-friendly family status map, not a literal live-dot tracker.

Recommendation: add a distinct live mode instead of replacing the settled map. That gives immediacy while someone is actively being watched and keeps the current battery story intact.

The important part

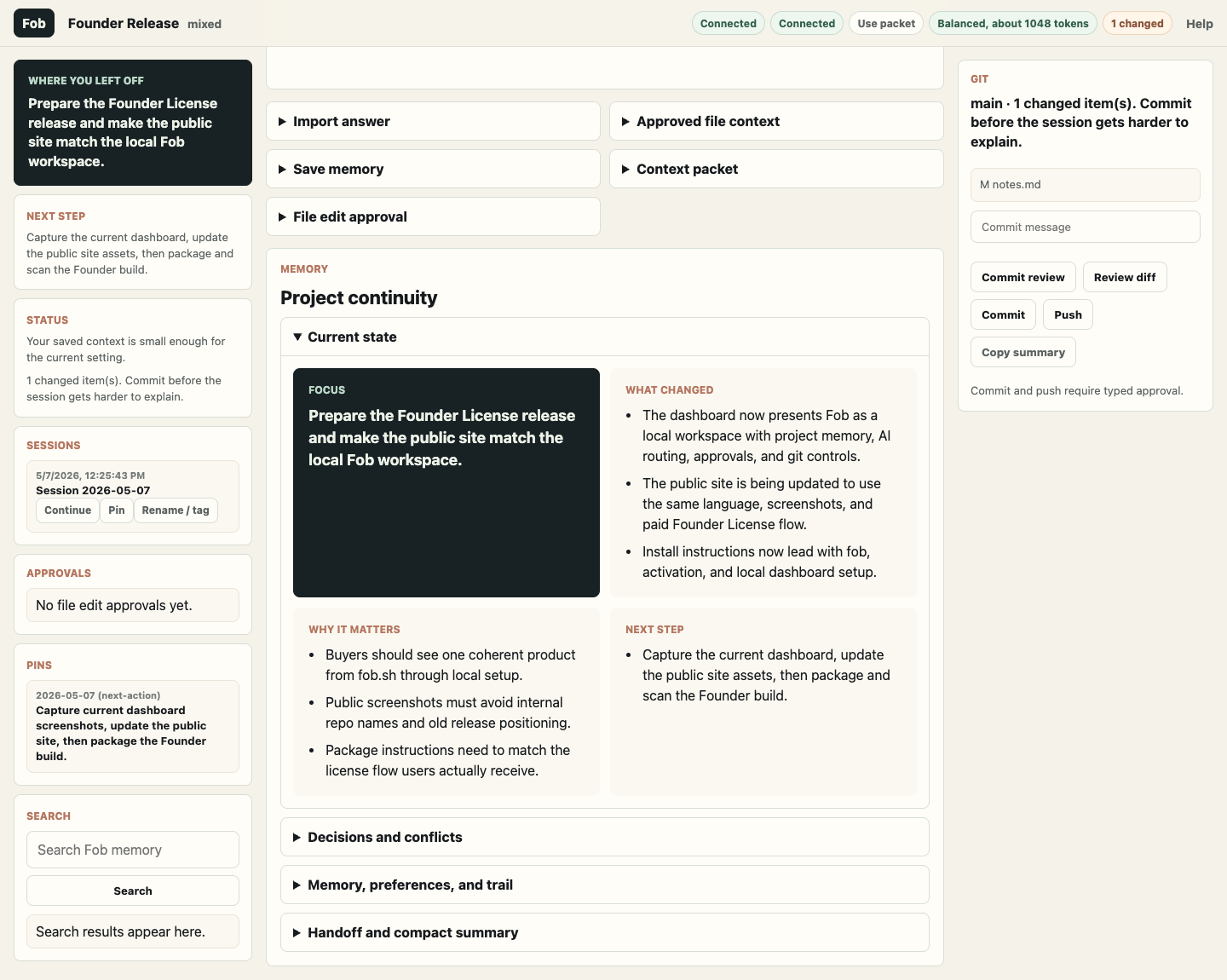

What this looked like in Fob

Proof point

Without Fob, the user has a long answer and has to decide what to do next. With Fob, the answer becomes saved context, a decision candidate, a follow-up path, and a handoff for the next AI tool.